Jacobi method

In numerical linear algebra, the Jacobi method is an iterative algorithm for determining the solutions of a system of linear equations in which the diagonal element has the largest absolute value in each row and column (a diagonally dominant system). Each equation is solved for its diagonal unknown, and approximate values are plugged in for the remaining unknowns. The process is then iterated until it converges. This algorithm is a stripped-down version of the Jacobi transformation method of matrix diagonalization. The method is named after German mathematician Carl Gustav Jakob Jacobi.


Description

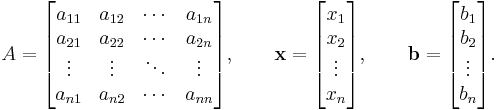

Given a square system of n linear equations:

$$A\mathbf{x} = \mathbf{b}$$

where:

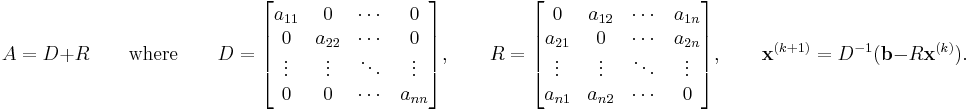

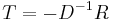

Then A can be decomposed into a diagonal component D, and the remainder R:

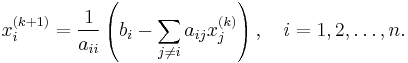

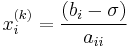
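The decomposition into a diagonal part and a remainder can be sketched in a few lines of Python. This is only an illustration: the helper name `split_diagonal` and the 3×3 matrix are assumptions made for the example and do not come from the article.

```python
def split_diagonal(A):
    """Split a square matrix (list of rows) into D and R so that A = D + R.

    D keeps only the diagonal entries; R keeps everything else.
    """
    n = len(A)
    D = [[A[i][j] if i == j else 0.0 for j in range(n)] for i in range(n)]
    R = [[A[i][j] if i != j else 0.0 for j in range(n)] for i in range(n)]
    return D, R


# Illustrative matrix (hypothetical values, chosen only for this sketch).
A = [[4.0, -1.0, 0.0],
     [-1.0, 4.0, -1.0],
     [0.0, -1.0, 4.0]]
D, R = split_diagonal(A)
```

Because D is diagonal, applying its inverse only requires dividing by each diagonal entry, which is what makes the iteration cheap.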

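The system can then be rewritten as $D\mathbf{x} = \mathbf{b} - R\mathbf{x}$, and the Jacobi method solves the left-hand side for $\mathbf{x}$ using the previous iterate on the right-hand side:

$$\mathbf{x}^{(k+1)} = D^{-1}\left(\mathbf{b} - R\mathbf{x}^{(k)}\right)$$

The element-based formula is thus:

$$x_i^{(k+1)} = \frac{1}{a_{ii}}\left(b_i - \sum_{j \ne i} a_{ij}\, x_j^{(k)}\right), \quad i = 1, 2, \ldots, n$$

Note that the computation of $x_i^{(k+1)}$ requires each element in $\mathbf{x}^{(k)}$ except $x_i^{(k)}$ itself. Unlike in the Gauss–Seidel method, $x_i^{(k)}$ cannot be overwritten with $x_i^{(k+1)}$, as that value is still needed by the rest of the computation. The minimum amount of storage is therefore two vectors of size n.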
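A single update of every component can be sketched as follows. The function name `jacobi_sweep` and the 2×2 system are assumptions made for illustration. The sketch keeps the old and new iterates in two separate lists, which is the two-vector storage requirement mentioned above.

```python
def jacobi_sweep(A, b, x_old):
    """Return the next Jacobi iterate computed only from x_old.

    A is a list of rows, b and x_old are lists of the same length.
    """
    n = len(b)
    x_new = [0.0] * n  # second vector: x_old must stay intact during the sweep
    for i in range(n):
        # Sum of off-diagonal terms, always using the *old* values.
        sigma = sum(A[i][j] * x_old[j] for j in range(n) if j != i)
        x_new[i] = (b[i] - sigma) / A[i][i]
    return x_new


# Hypothetical system, chosen only for this sketch.
A = [[4.0, 1.0],
     [2.0, 5.0]]
b = [9.0, 12.0]
x1 = jacobi_sweep(A, b, [0.0, 0.0])  # first sweep from the zero vector
x2 = jacobi_sweep(A, b, x1)          # second sweep
```

Writing the new values into `x_old` in place would turn this into a Gauss–Seidel-style update rather than a Jacobi one.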

Algorithm

Choose an initial guess  to the solution

to the solution

- while convergence not reached do

- for i := 1 step until n do

- for j := 1 step until n do

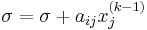

- if j != i then

- end if

- if j != i then

- end (j-loop)

- end (i-loop)

- check if convergence is reached

- for i := 1 step until n do

- end (while convergence condition not reached loop)
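The loop structure above could be written in Python roughly as follows. This is a minimal sketch, not a reference implementation. The function name `jacobi`, the parameters `tol` and `max_iter`, and the stopping rule (largest change between successive iterates) are assumptions, since the article does not fix a particular convergence test.

```python
def jacobi(A, b, x0=None, tol=1e-10, max_iter=500):
    """Iterate the Jacobi update until successive iterates differ by < tol.

    Returns the approximate solution and the number of iterations used.
    """
    n = len(b)
    x = list(x0) if x0 is not None else [0.0] * n
    for k in range(1, max_iter + 1):
        x_next = [0.0] * n
        for i in range(n):
            sigma = 0.0
            for j in range(n):
                if j != i:
                    sigma += A[i][j] * x[j]
            x_next[i] = (b[i] - sigma) / A[i][i]
        # Assumed convergence check: largest component-wise change.
        change = max(abs(x_next[i] - x[i]) for i in range(n))
        x = x_next
        if change < tol:
            return x, k
    raise RuntimeError("Jacobi iteration did not converge within max_iter")


# Hypothetical strictly diagonally dominant system with solution (1, 2, 3).
A = [[4.0, -1.0, 0.0],
     [-1.0, 4.0, -1.0],
     [0.0, -1.0, 4.0]]
b = [2.0, 4.0, 10.0]
solution, iterations = jacobi(A, b)
```

A residual-based test such as the norm of $A\mathbf{x} - \mathbf{b}$ would be an equally reasonable stopping rule. The change-based one is used here only because it needs no extra matrix-vector product.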

Convergence

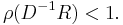

The standard convergence condition (for any iterative method) is that the spectral radius of the iteration matrix, here $D^{-1}R$, is less than 1:

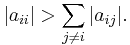

The method is guaranteed to converge if the matrix A is strictly or irreducibly diagonally dominant. Strict row diagonal dominance means that for each row, the absolute value of the diagonal term is greater than the sum of absolute values of other terms:

$$|a_{ii}| > \sum_{j \ne i} |a_{ij}|$$

The Jacobi method sometimes converges even if these conditions are not satisfied.
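The sufficient condition can be checked directly from the matrix entries. The sketch below uses two hypothetical helpers, `is_strictly_diagonally_dominant` and `jacobi_matrix_inf_norm`, and example matrices that are not from the article. The infinity norm of $D^{-1}R$ is an upper bound on its spectral radius, and it is below 1 exactly when A is strictly row diagonally dominant.

```python
def is_strictly_diagonally_dominant(A):
    """True if |a_ii| exceeds the sum of |a_ij|, j != i, in every row."""
    n = len(A)
    return all(
        abs(A[i][i]) > sum(abs(A[i][j]) for j in range(n) if j != i)
        for i in range(n)
    )


def jacobi_matrix_inf_norm(A):
    """Infinity norm (largest absolute row sum) of D^{-1} R.

    This bounds the spectral radius from above; a value below 1
    therefore guarantees convergence, a value of 1 or more decides nothing.
    """
    n = len(A)
    return max(
        sum(abs(A[i][j]) for j in range(n) if j != i) / abs(A[i][i])
        for i in range(n)
    )


# Hypothetical examples.
dominant = [[4.0, -1.0, 0.0],
            [-1.0, 4.0, -1.0],
            [0.0, -1.0, 4.0]]
# Not dominant in row 1, yet the eigenvalues of D^{-1} R are +/- sqrt(0.5),
# so the spectral radius is about 0.707 and the iteration still converges.
not_dominant = [[1.0, 2.0],
                [0.25, 1.0]]

dominant_ok = is_strictly_diagonally_dominant(dominant)
dominant_norm = jacobi_matrix_inf_norm(dominant)
other_ok = is_strictly_diagonally_dominant(not_dominant)
other_norm = jacobi_matrix_inf_norm(not_dominant)
```

The second matrix illustrates the closing remark of this section: the dominance test fails and the norm bound is inconclusive, but the spectral radius is still below 1.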

Example

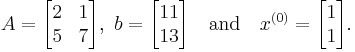

A linear system of the form $A\mathbf{x} = \mathbf{b}$ with initial estimate $\mathbf{x}^{(0)}$ is given by

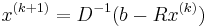

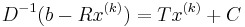

We use the equation  , described above, to estimate

, described above, to estimate  . First, we rewrite the equation in a more convenient form

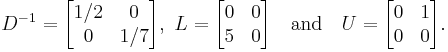

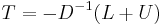

. First, we rewrite the equation in a more convenient form  , where

, where  and

and  . Note that

. Note that  where

where  and

and  are the strictly lower and upper parts of

are the strictly lower and upper parts of  . From the known values

. From the known values

we determine  as

as

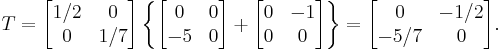

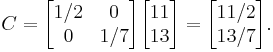

Further, C is found as

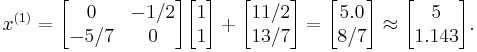

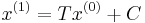

With T and C calculated, we estimate $\mathbf{x}$ as $\mathbf{x}^{(1)} = T\mathbf{x}^{(0)} + C$:

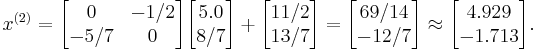

The next iteration yields

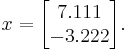

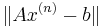
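The $T\mathbf{x} + C$ form of the iteration is easy to try out numerically. The sketch below is only an illustration: the values of `A`, `b` and the initial estimate are assumptions chosen for the example and are not read from the figures above, and the helper `step` is a hypothetical name.

```python
# Assumed illustrative data (not taken from the figures in this section).
A = [[2.0, 1.0],
     [5.0, 7.0]]
b = [11.0, 13.0]
x = [1.0, 1.0]  # initial estimate x^(0)
n = len(b)

# T = -D^{-1} R: zero on the diagonal, -a_ij / a_ii elsewhere.
T = [[0.0 if i == j else -A[i][j] / A[i][i] for j in range(n)]
     for i in range(n)]
# C = D^{-1} b.
C = [b[i] / A[i][i] for i in range(n)]


def step(T, C, x):
    """One iteration x -> T x + C."""
    return [sum(T[i][j] * x[j] for j in range(len(x))) + C[i]
            for i in range(len(x))]


history = [x]
for _ in range(25):
    x = step(T, C, x)
    history.append(x)

# history[1] is x^(1), history[2] is x^(2), history[25] the 25th iterate.
# For this assumed system the exact solution is (64/9, -29/9),
# roughly (7.111, -3.222), and the iterates approach it.
```

Precomputing T and C once is convenient for small dense examples. For large sparse systems the element-wise update is normally preferred because T never has to be formed explicitly.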

This process is repeated until convergence (i.e., until $\|A\mathbf{x}^{(n)} - \mathbf{b}\|$ is small). The solution after 25 iterations is

See also

- Gauss–Seidel method

- Successive over-relaxation

- Iterative method (linear systems)

- Gaussian Belief Propagation

External links

- This article incorporates text from the article Jacobi_method on CFD-Wiki that is under the GFDL license.

- Black, Noel; Moore, Shirley; and Weisstein, Eric W., "Jacobi method" from MathWorld.

- Jacobi Method from www.math-linux.com

- Module for Jacobi and Gauss–Seidel Iteration

- Numerical matrix inversion
